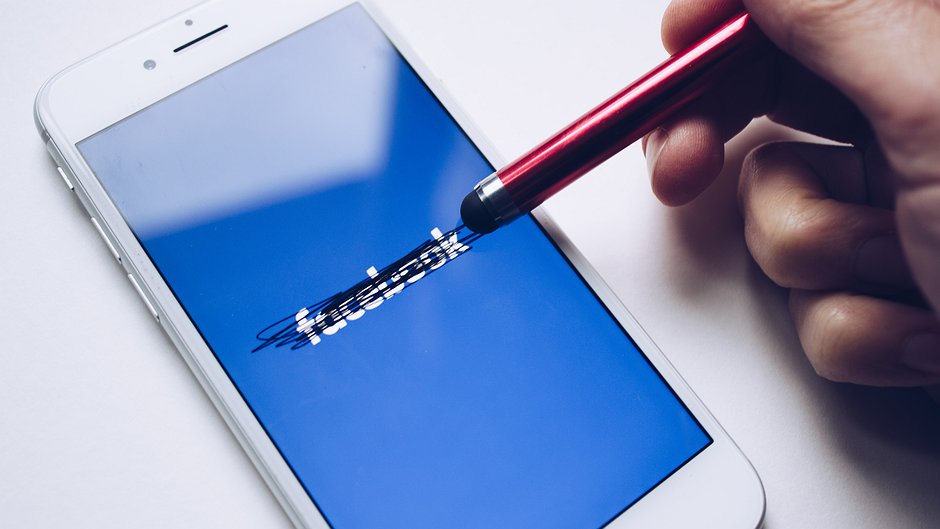

Delete Facebook? Not just yet

By Dia Kayyali, WITNESS Program Manager, Tech+Advocacy


In light of the scandal about Facebook during the last few weeks, we at WITNESS think it’s important to make something clear: Facebook has responsibilities to its users, and ultimately all those affected by its product. Facebook has responsibilities around how it handles user data and user content. It’s time for Facebook to be more transparent, to provide more due process, and give users more control. It’s time for Facebook to uphold human rights. It’s not time for WITNESS to delete Facebook—yet. But it’s clear something needs to change.

In the wake of the Cambridge Analytica scandal, and as the changes to European Union privacy law known as General Data Protection Regulation (GDPR) approach, it’s good to see Facebook’s irresponsible data practices finally getting the public scrutiny they deserve. But it’s equally essential that the role of Facebook as a forum for expression not be forgotten. For many people around the world, Facebook isn’t just the primary way that they connect and share information, it is THE INTERNET, and Facebook seems intent on expanding its territory as quickly as possible, constantly acquiring other companies like WhatsApp and launching new products. Facebook content, from propaganda videos to posts from citizen journalists, is increasingly shaping the world. Telling users to delete Facebook ignores the fact that doing so is a privilege that not all people can afford.

That’s why WITNESS continues to push for a better Facebook through our Tech + Advocacy program. As always when we see an issue that affects the people we work with, we’ve got some recommendations for Facebook:

WHAT NEEDS TO CHANGE? MORE TRANSPARENCY AND MORE (DUE) PROCESS

At the most fundamental level, Facebook needs to be more transparent about data practices and content regulation. When it comes to sharing user data, Facebook needs to start by implementing the “affirmative express consent” requirements of a 2011 settlement with the Federal Trade Commission; the GDPR also contains clear requirements on consent. Facebook must ensure that users can understand how their data, from location information to sensitive information about medical conditions determined through their likes, could be shared. This includes informing users of how other users could expose or share their data, as was the case in the Cambridge Analytica leak (in that case, users who took a quiz shared information about their friends as well).

In the wake of the Cambridge Analytica story, blogs and newspapers published dozens of articles with step-by-step instructions on how to control user data. But this shouldn’t be necessary. Instead, Facebook should take steps like creating plain language about their practices and all potential avenues of data sharing and use, making it physically easy to read on the site, and putting it in every user’s feed for review.

When it comes to content, the site needs to start being clear about what exactly it is taking down and why. At a macro level, the company should include information on takedowns in transparency reports. At the micro level, it needs to provide users with notices and communications that are specific and easy to understand. WITNESS knows this from the many hours we have spent working with users who do not understand why their content was taken down. They receive notices that give them little to no information. For example, Palestinian activists sharing hundreds of videos of human rights abuses on their accounts could have their entire profiles suspended because of only two or three videos. Exactly how many violations would lead to account suspension isn’t clear, and activists won’t get a specific warning that their account is about to get shut down. Instead of explaining exactly how videos have violated the standards, Facebook will simply send a generic statement saying content violated community standards because of violent and graphic content. Sometimes, it feels like someone at Facebook is just flipping a coin.

But unfortunately, what is often happening is that a powerful government is working to silence activists on the platform through their contacts at Facebook. That’s why a major part of transparency for Facebook is that the company needs to be clear on what its relationship is with governments, especially those governments that manipulate the company’s rules to silence activists on the platform. Instead of defensive denials about their meetings with government officials, the company should publish statements about who they meet with and what they discuss.

Facebook also needs better processes around both content regulation and use of data. For content regulation, the platform should provide clearer processes for users who want to appeal a decision like an account termination or removal of a piece of content. These processes should provide some basic due process for Facebook users facing Facebook decisions, including proper notice of violations and evidence for violations, the chance for users to provide their own evidence, and a clear written explanation for the results of such a process.

Similarly, users deserve more process when it comes to their data. Under the GDPR, Facebook will have to hire a “Data Protection Officer”, and users “may contact the data protection officer with regard to all issues related to processing of their personal data and to the exercise of their rights under [the GDPR].” Facebook should provide DPOs for everyone, not just EU citizens. Facebook should also provide better notifications about breaches and warnings about actions it plans on taking, such as the change to WhatsApp terms of service that allowed data sharing between WhatsApp and Facebook. Lastly, Facebook should provide all users with the ability to easily access the information the platform has about them, including information from other services like WhatsApp. Currently, users can go into Facebook settings and see some of this information, such as likes the company is using for advertising purposes. But users wouldn’t be able to see and delete, for example, a list of all locations Facebook has ever logged them at.

“IF YOU DON’T LIKE IT, JUST LEAVE”

WITNESS partners with and educates human rights defenders all over the world. And all over the world, those human rights defenders are using Facebook and its products to organize, communicate, and educate. For example, as state violence and brutal political repression surge in Brazil, activists and residents of favelas targeted by this violence continue to use Facebook as a news service, method of sharing files, alert system for information about violence and other urgent news, and a place to share “know your rights” information. And as we know from our regional work in Meso and South America, Africa, the Middle East/North Africa, and Southeast Asia, when activists aren’t using Facebook, they’re likely to use WhatsApp to share incredibly sensitive media, oftentimes captured in places like Syria where there are no ordinary journalists.

It’s easy for people in positions of privilege—especially those with easy access to many forms of communication, those with economic privilege, and those who aren’t facing serious human rights abuses on a daily basis—to excuse Facebook’s behavior by saying something along the lines of “Facebook is a business providing a free service. Its business model depends on what might seem like the unethical use of user data to you—but that use is legal. If people don’t like it, they shouldn’t use the platform.”

The economic and Internet access component to deleting Facebook is clear. Telling people to get off of Facebook doesn’t take into account mobile service packages that have special Facebook and WhatsApp data plans that can save people money. It also doesn’t take into account that Facebook often works where other services and products continue to fail, especially in places with poor Internet connections.

But WITNESS knows better. It would be easy to write thousands of words about Facebook’s problems, and many of our fellow civil society organizations have. We have also warned about the privacy, security, and expression problems presented by Facebook and associated tools. But the fact is that human rights defenders are using Facebook, and will continue even if we advise them not to (which would be irresponsible, for the reasons listed above).

We support efforts to develop and make truly usable alternatives to major platforms. But in the meantime, we will continue to fight for a Facebook that respects, protects, and supports human rights defenders, as we have been doing through our Tech + Advocacy program. We will keep a close eye on policy and legal developments, and we will join the fight where appropriate.

We know that the decisions Facebook and other platforms make have a huge impact on people’s lives, online and offline, for better or for worse. That is why our Tech + Advocacy work is more important now than ever. It isn’t just people’s privacy at stake, it’s also their physical safety, their right to assemble, and their freedom of expression.

Dia Kayyali coordinates WITNESS’ tech + advocacy work, engaging with technology companies and working on tools and policies that help human rights advocates safely, securely and ethically document human rights abuses and expose them to the world. Check out WITNESS' free courses on Advocacy Assembly today.
